The Problem

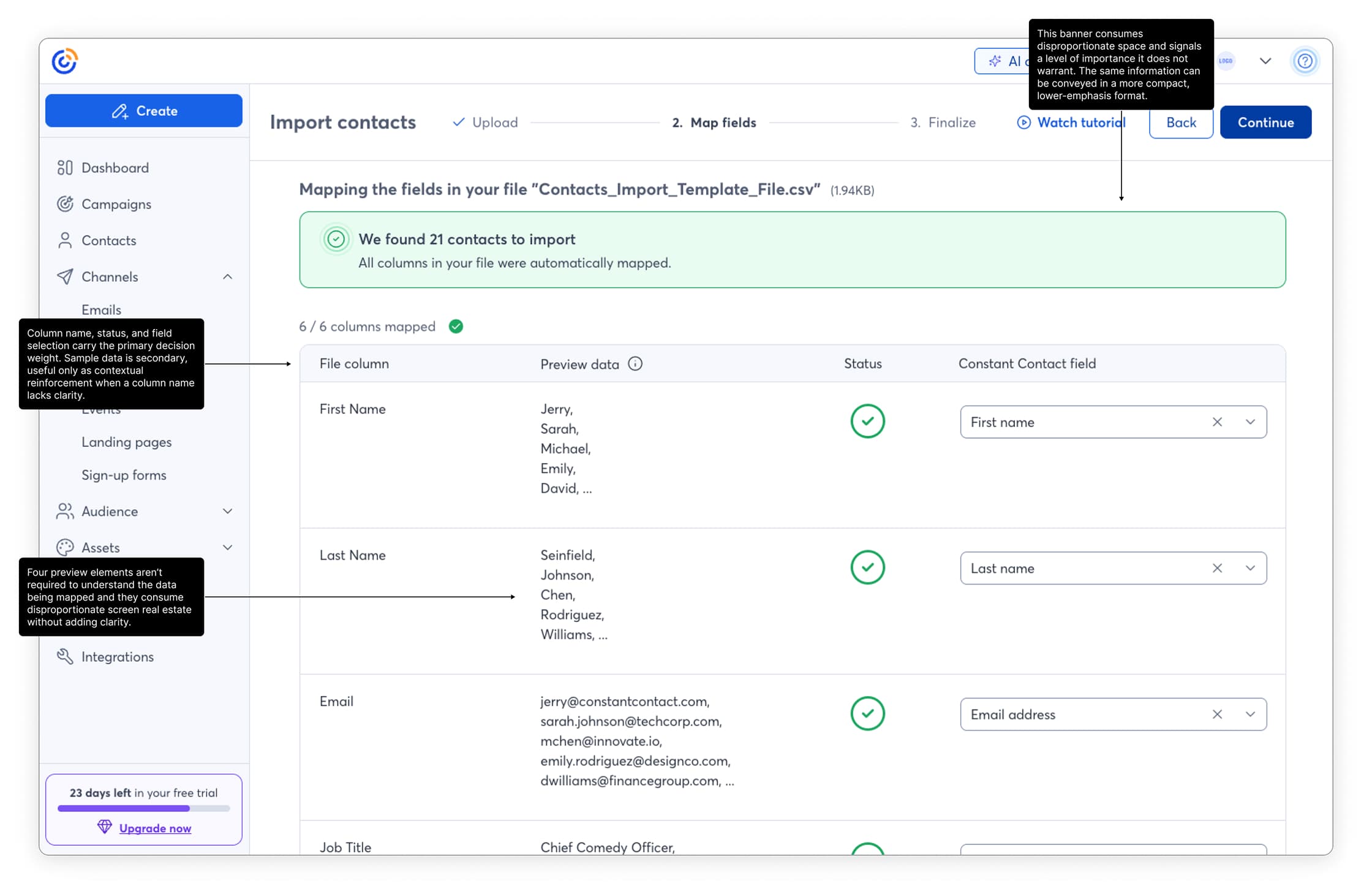

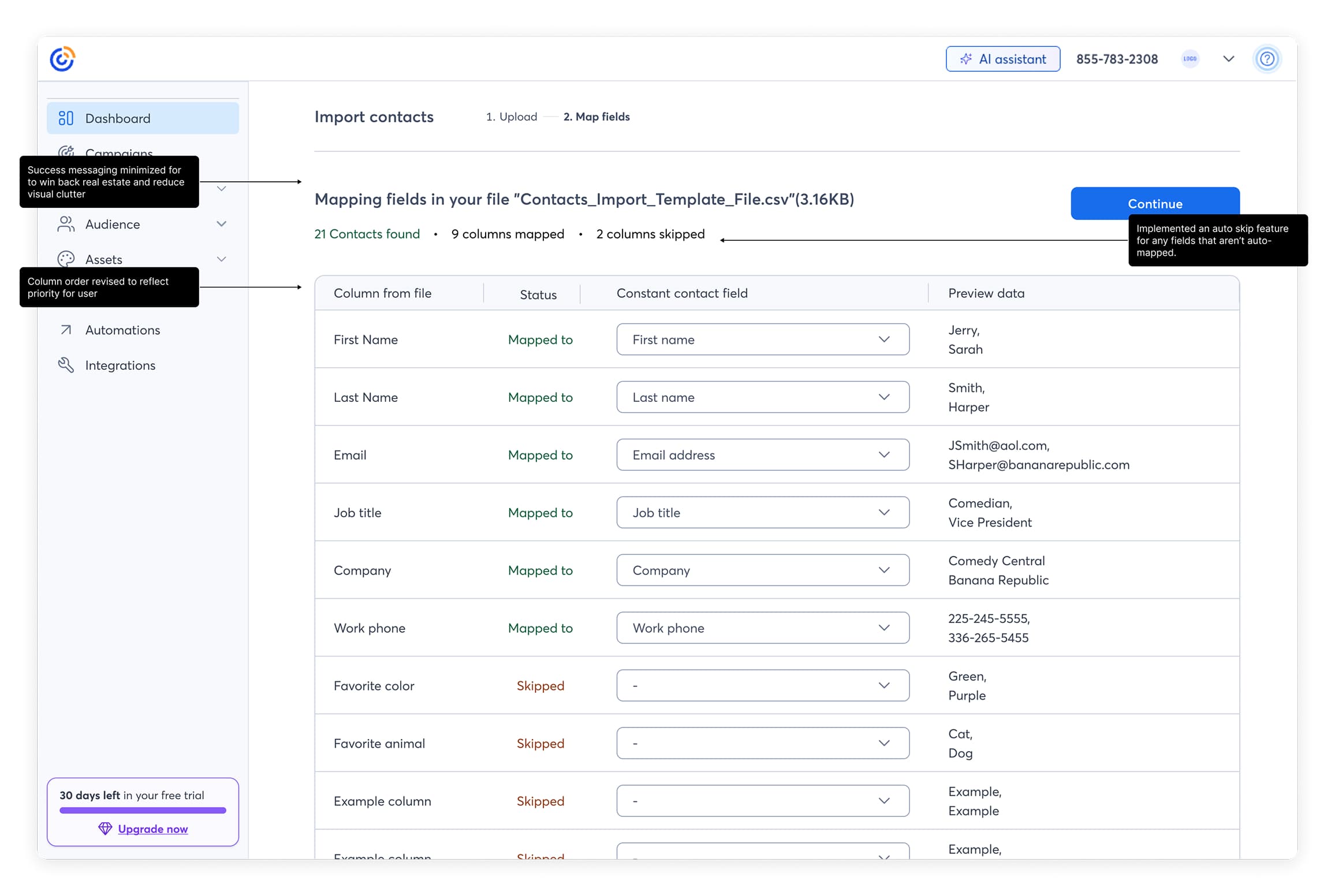

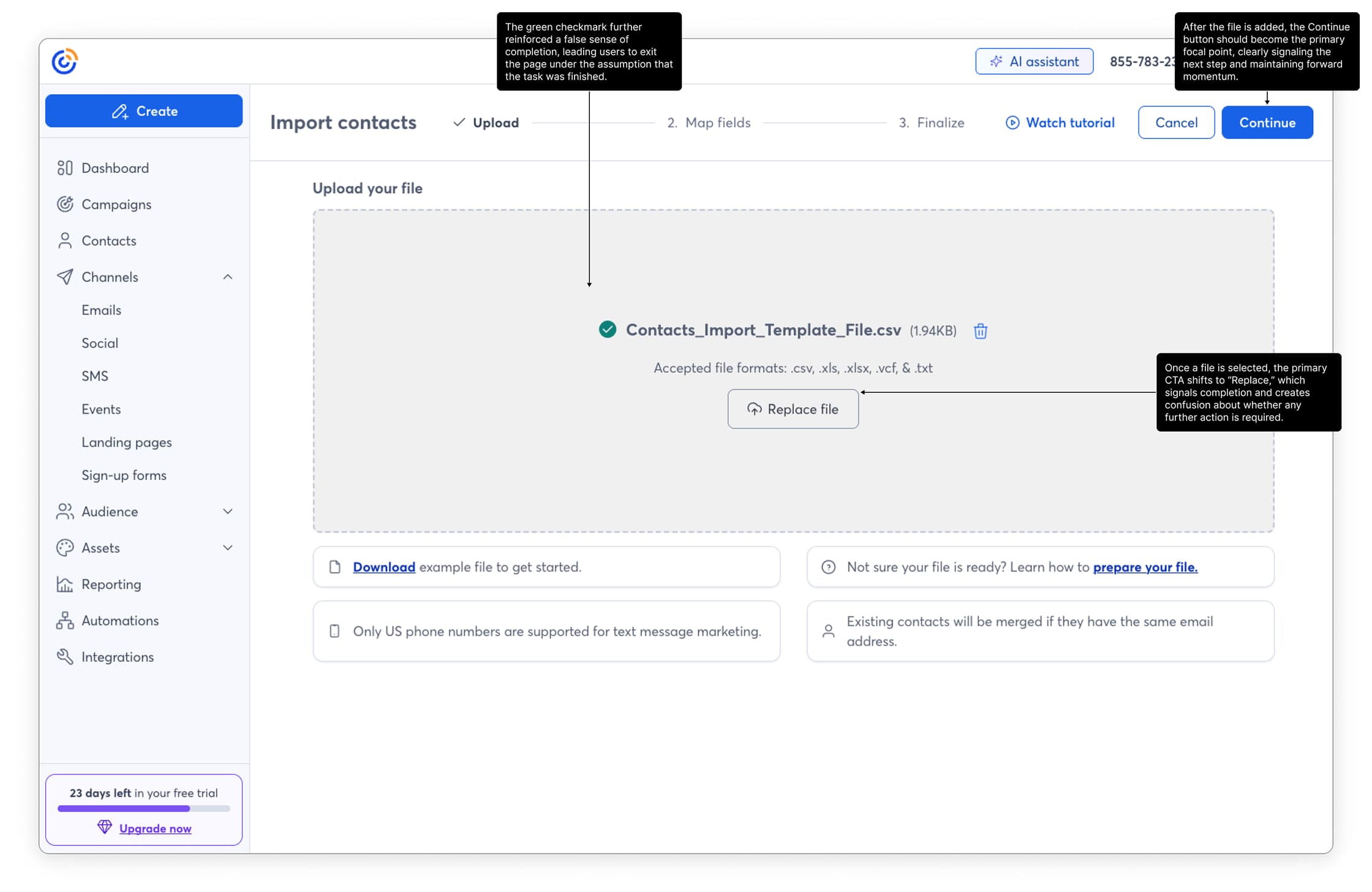

Analytics showed drop-off at every stage, but the most damaging issue was a false signal. After selecting a file, a green checkmark appeared on screen. To users, that looked like confirmation the upload was complete. Many closed the browser and moved on, never knowing they still had mapping and consent steps ahead. The flow had an invisible exit built into it.

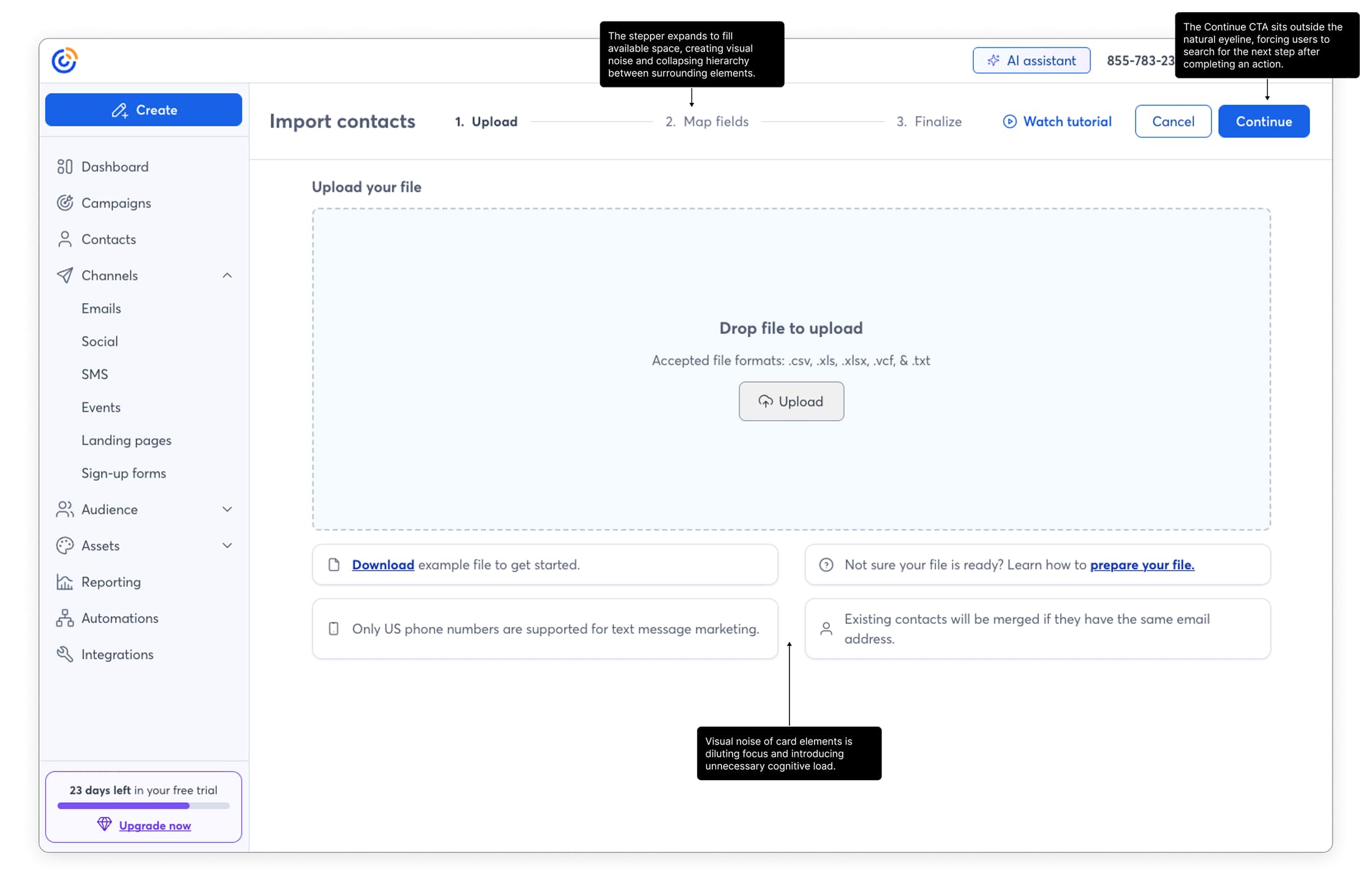

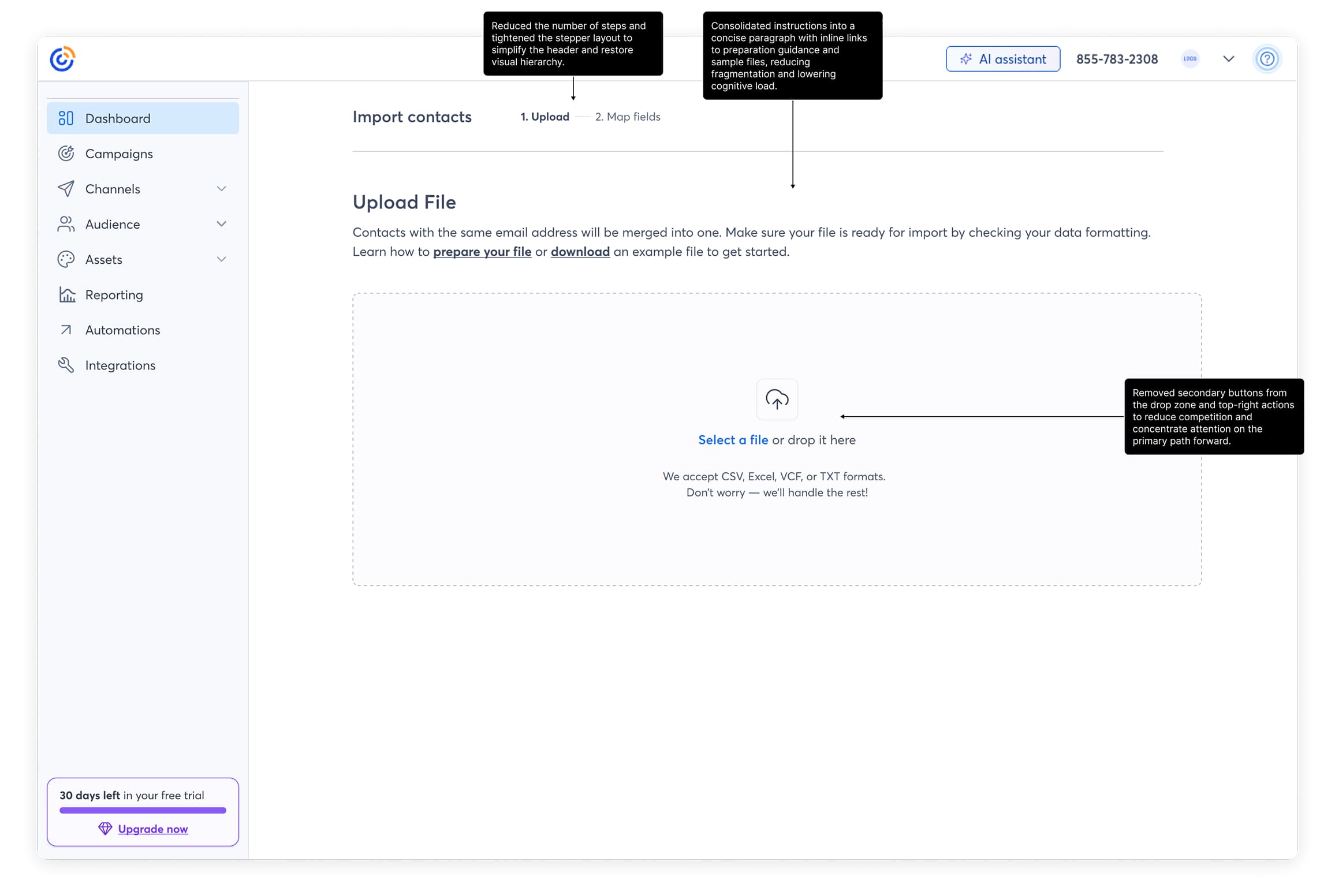

Beyond the false completion signal, the mapping interface was visually noisy and hard to parse. Help content was scattered rather than surfaced where it was needed. The consent step appeared as a blocking interruption that broke forward momentum. Error states were silent. Each issue was individually minor. Together, they made completing the flow feel like work.

Redesigning the Upload Flow

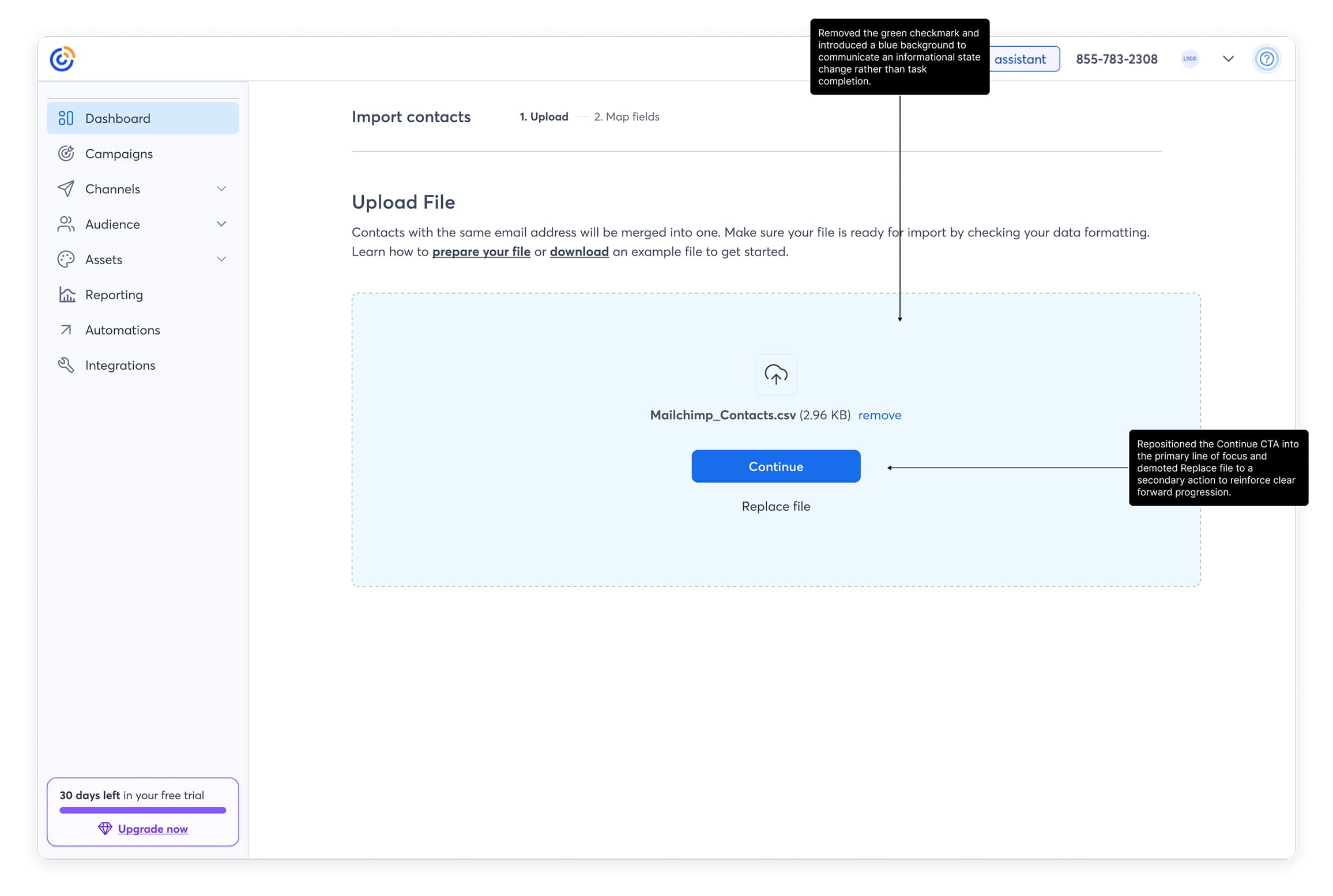

The redesign stayed within the existing architecture. I removed the false completion signal and replaced it with progressive disclosure that made the next step explicit. Primary actions after file selection became more visually prominent. The field mapping interface was simplified so that relationships between columns were easier to read. Help documentation moved from scattered placements into contextual inline guidance. Consent became an inline modal rather than a separate blocking step. Error states were rewritten with clear explanations and recovery paths.

None of these were large surface changes. The bet was twofold: that removing small points of friction at every step would add up to a meaningful improvement in completion, and that removing the false completion signal alone would be enough to move the primary metric.